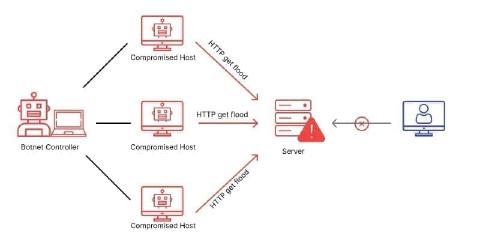

Imagine you’re training an internal language model on customer data, contracts and proprietary business information. The model delivers good results. Your team is happy. And then someone from the legal team asks: “Where are these workloads actually running?”

For many companies, the honest answer is: on AWS. Or Azure. Or GCP. That is, on servers in the USA, or on servers belonging to US companies, wherever in the world they happen to be located.

Anyone building AI infrastructure should clarify four questions early, before the project is running and data is already being processed somewhere.

1. Where is my data being processed and who can access it?

Most companies know their data lives “somewhere in the cloud”, but not exactly where, and even less who could access it if it came to that. The CLOUD Act (Clarifying Lawful Overseas Use of Data Act) is a US federal law from 2018. It requires providers subject to US jurisdiction to hand over data in their possession, custody or control when US authorities present a valid legal order, even if that data is physically stored outside the USA.

AWS, Microsoft Azure and Google Cloud are all US companies, so the fact that their data centres are in Frankfurt, Amsterdam or Zurich changes nothing. Once your provider is subject to US jurisdiction, US authorities can under certain conditions demand access to your data, without your knowledge and without involving Swiss courts.
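One way to make this first question concrete is a small inventory check. The following is a minimal sketch, not a legal assessment: the `Workload` fields, the provider identifiers and the example entries are hypothetical, and the provider set only contains the three US companies named above. It flags a workload by who operates the infrastructure, not by where the data centre stands.

```python
from dataclasses import dataclass

# Assumption: only the three US providers named in the text are listed here.
US_JURISDICTION_PROVIDERS = {"aws", "azure", "gcp"}


@dataclass
class Workload:
    name: str
    provider: str  # hypothetical identifier, e.g. "aws"
    region: str    # physical location of the data centre


def cloud_act_exposed(workload: Workload) -> bool:
    """Return True if the provider is subject to US jurisdiction.

    The region is deliberately ignored: a European data centre
    does not change which jurisdiction the provider falls under.
    """
    return workload.provider.lower() in US_JURISDICTION_PROVIDERS


# Hypothetical inventory for illustration only.
workloads = [
    Workload("support-ticket-finetune", "aws", "eu-central-1"),
    Workload("contract-rag", "swiss-host", "zurich"),
]

exposed = [w.name for w in workloads if cloud_act_exposed(w)]
print(exposed)
```

A real inventory would also need to cover subprocessors and managed services, which this sketch leaves out.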

2. What exactly am I processing, and how sensitive is it?

When training a language model, data isn’t simply stored: it is processed, analysed and embedded into the model. This can include:

- Customer communications and support tickets

- Contracts and legal documents

- Medical or financial data

- Internal knowledge bases and proprietary processes

This data defines what your model can do and how it responds. It’s not just files on a server. It’s the core of your competitive advantage.

The same applies to inference and fine-tuning: even companies that aren’t training their own models but are processing customer data through an existing model are affected. When this data is processed on infrastructure operated by a US provider, it falls under the CLOUD Act. That’s not a hypothetical risk but a structural one.

3. Can I demonstrate data sovereignty to regulatory authorities?

For companies in regulated industries, the situation is even clearer. Financial institutions subject to FINMA must be able to demonstrate data sovereignty. In healthcare, strict rules apply to the processing of sensitive personal data. For the public sector, using US infrastructure for critical workloads is often simply not permitted.

This means: many Swiss companies currently running their AI workloads on AWS or Azure are sitting on a compliance problem. Not because they’re careless, but because the alternative wasn’t on their radar.

4. Do I have a contact who understands my workload technically?

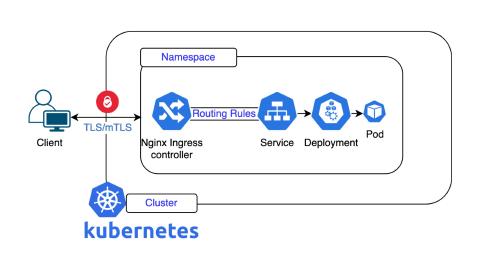

AI infrastructure is not a standard product. Requirements differ considerably depending on the workload: training large language models, fast inference, fine-tuning or RAG systems each place different demands on hardware, network and configuration.

Having to resolve these questions through an anonymous L1 ticketing system wastes time and patience. Peak Privacy, a company specialising in LLM inference, made the switch. Fabio Duò, founder of Peak Privacy, describes it like this:

“Working with Nine was a real stroke of luck. We finally found a partner in Switzerland who not only understands our specific needs for LLM inference, but also has the technical expertise to build a perfectly tailored, high-performance and competitively priced server solution. It was a game changer for us.”

What Peak Privacy describes is not an isolated case. Once you’ve worked with a team that understands dedicated AI workloads, and is directly reachable rather than hidden behind an L1 ticketing system, you don’t want to go back.

The Swiss alternative

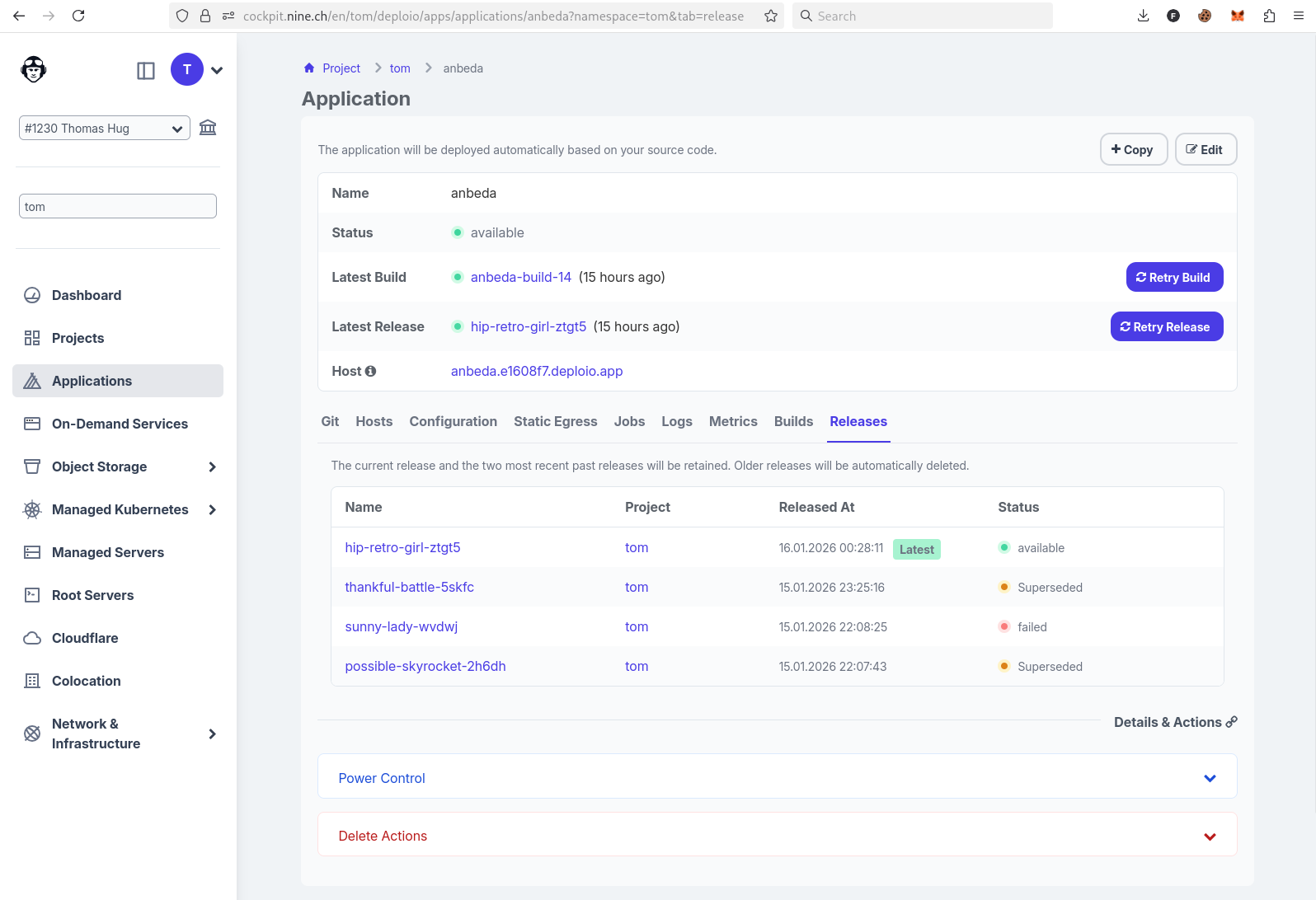

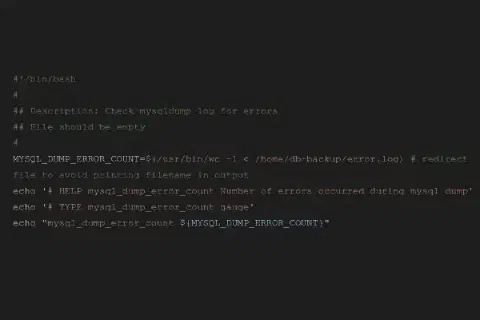

We operate dedicated Nvidia hardware in Swiss data centres (NTS Altstetten and NTT Rümlang, both running on renewable energy). No US parent company, no US jurisdiction. ISO 27001 certified since 2013.

Various GPU configurations are available, matched to the workload:

- Nvidia H100 NVL: maximum performance for training large language models

- Nvidia L40S: optimised for fast inference and production LLM operations

- Nvidia RTX 4500 Ada: efficient for fine-tuning, RAG systems and lightweight AI applications

- Nvidia A100 40GB: proven AI GPU for demanding training and inference workloads

All configurations are dedicated: no shared resources, no unknown neighbours, real bare-metal performance.
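The matching of GPU to workload described above can be sketched as a simple lookup. This is an illustrative sketch only: the workload keys and the `suggest_gpus` helper are hypothetical names, and the mapping merely restates the list above rather than any sizing rule. Actual sizing depends on model size, batch size and latency targets.

```python
# Mapping restated from the configuration list; keys are hypothetical labels.
GPU_FOR_WORKLOAD = {
    "llm_training": ["Nvidia H100 NVL", "Nvidia A100 40GB"],
    "inference": ["Nvidia L40S", "Nvidia A100 40GB"],
    "fine_tuning": ["Nvidia RTX 4500 Ada"],
    "rag": ["Nvidia RTX 4500 Ada"],
}


def suggest_gpus(workload: str) -> list[str]:
    """Return the GPU configurations listed for a workload type."""
    try:
        return GPU_FOR_WORKLOAD[workload]
    except KeyError:
        known = ", ".join(sorted(GPU_FOR_WORKLOAD))
        raise ValueError(
            f"unknown workload {workload!r}; expected one of: {known}"
        ) from None


print(suggest_gpus("inference"))
```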

Conclusion

AWS isn’t wrong, but it’s not the right choice for every workload. Anyone training AI models on sensitive data, working in a regulated industry, or simply wanting to retain control of their data needs an alternative.

That alternative exists. It runs on Swiss soil, is ISO-certified and is supported by engineers you can call directly.

For a structured starting point, the AI Compliance Checklist for Swiss Companies covers the most important points on a single page and is free to download.

Ready to find the right stack for your AI workloads? Book a consultation now.